By Chris Chipello

What makes music so powerful? What is it about a particular moment in a concert that sends shivers down the spines of listeners? And what is going on in the brains of listeners when that magical moment occurs?

The answers to those and other questions about the mysteries of music and the mind are being teased out in laboratories across Montreal, many of them linked to two interuniversity collaborations in which McGill plays a central role: the Centre for Interdisciplinary Research in Music, Media and Technology (CIRMMT), based at the Schulich School of Music, and the International Laboratory for Brain, Music and Sound Research (BRAMS), a lab jointly affiliated with McGill and l’Université de Montréal.

The research draws from disciplines including psychology, neuroscience, computer science, music, audiology, engineering and education. And the findings could ultimately have implications well beyond a better understanding of music. Some of the insights could, for example, lead to therapies for disorders involving a breakdown in brain function.

“Montreal, with CIRMMT and BRAMS, is the world centre for our area of research,” says Hauke Egermann, a postdoctoral scholar who was drawn to CIRMMT from Germany after completing his PhD a year ago on music and emotion. “This is the biggest amount of people in one town working on music cognition, neuroscience, the brain and music technology.”

A few blocks up University St., in his office at the Montreal Neurological Institute, BRAMS founding co-director Robert Zatorre echoes that assessment. “I think if you look worldwide, there is no other city that has anything like what we have.” The array of experts in areas such as speech, music therapy, spatial-audio perception and motor learning – combined with an unusually collaborative spirit – has created a special environment here, he says.

Following is a look at some of the recent research activity at BRAMS and CIRMMT, and how the two are combining to shed light on how we perceive music, why it moves us, and what lessons we might draw from those insights.

BRAMS

BRAMS was created in 2004 by Zatorre, a leading expert in the structure and function of the auditory cortex, and Isabelle Peretz, a Université de Montréal specialist in the cognitive processes underlying music. Initially a “virtual centre,” the lab since 2007 has been housed mainly in a sprawling facility within a former convent on the UdeM campus on the flank of Mont Royal.

The installations include an all-black room without echoes, where test subjects sit inside a kind of metal dome ringed by speakers. The futuristic apparatus, nicknamed “The Array,” enables researchers to create an environment in which sound comes from all around the listener – as it does in real life. For example, they might record and replay the sounds of an actual street corner, with a person speaking behind the listener, a bus going by, cars waiting to turn. But it also gives testers the capacity to manipulate those sounds. So they might introduce a bird singing nearby, to see if the listener can detect it.

Zatorre, who joined McGill as a post-doc 29 years ago, has been among the researchers helping revolutionize the field in the past 10 or 15 years. For example, it was discovered some years ago that, in blind people, the area of the brain normally dedicated to processing visual signals responded to sounds. At first, scientists wondered whether the activity was meaningful, or just random “noise” reaching an unused area of the brain. But Zatorre’s team showed that the more activity there was, the greater the subject’s perception of sound and space. The “visual cortex” was, indeed, rewired for a different function.

“If you take a textbook from not that long ago – the 1980s – you will read that the brain is a sort of static structure. Maybe it can change during infancy, but once you’re an adult, you’re stuck with it,” he notes. “That was really the received wisdom. Certainly no one suspected that what we call visual cortex could act up with sound – or vice versa: we see the same thing in deaf people, the part of the brain that normally handles sound responds to light. Really, nobody suspected that. That’s a huge change.”

Rewired Brains

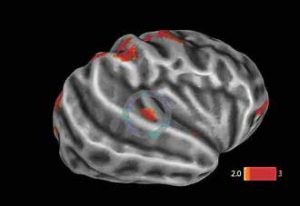

Recent advances in MRI technology and in signal processing of images make it possible to actually look at connections in the brain. Researchers can put a blind person in The Array and test how sharp their spatial hearing is – then put the same individual in an MRI scanner and examine how their brain is rewired, compared with that of a sighted person. “We try to tie those elements together and figure out how this rewiring affects their ability to perceive,” Zatorre explains.

“The point of the studies is to understand the rules by which the brain rewires itself. What are the precursors to that? Why does it rewire itself? How does that happen? And if we can figure that out, it’s like a key that would unlock a huge wealth of possibilities, because if we really knew the conditions that maximize the possibility of this kind of reorganization, we could then apply that to any disorder where rewiring would help. Brain injuries, strokes, Parkinson’s disease – any kind of disorder where you have some kind of lesion, or breakdown in brain function, in principle you might be able to work around it if you know what the rules are.”

Musicians are another interesting test population, because they also have a better sense of spatial hearing than most people. In collaboration with Schulich music technology Prof. Marcelo Wanderley, Concordia University Prof. Virginia Penhune, and Schulich PhD student Avrum Hollinger – who, like Zatorre, are members of CIRMMT – Zatorre has begun conducting experiments to figure out what goes on in the brain of a musician while he or she is performing. To this end, they have developed a metal-free keyboard that can be played inside an MRI scanner and, with input from Schulich musicians, are refining a specially designed cello for the same purpose.

“We now have some very exciting imaging tools that allow us to see the different brain structural changes,” Zatorre says. “So, for example, we can measure the thickness of the cortex by putting someone in an MRI scanner. We can also look at the connections between different cortical areas.”

Musical exposure

In another experiment using MRI technology, Zatorre’s lab had 65 people take a test in which they listened to a short tune, and then to the same tune transposed. The subjects were asked whether the two tunes were identical, and their answers were scored across 100 such melodies. “Some people do very well, some are terrible at this.” The subjects’ brains were scanned before the test; researchers then looked to see if there were areas of the brain where thicker cortex correlated with better results. The upshot: “I can tell how well you will do on this test based on how thick that spot in your brain is,” Zatorre says.

“We’re following this up now because we want to know whether this is related to how much musical exposure you had. Is it that the more training you have, the bigger this region gets, and therefore the better you do on that test? That’s one possibility. But it could also be that this is in part genetically driven, that you might be born with a slightly thicker bit of cortex in that area of the brain, and that in turn predisposes you to be better at music. And of course these two things might interact. If you have a predisposition – people say ‘a good ear,’ right; what does that mean? Well, this might be part of what it means…

“We all know some people are better at music than others. Any music teacher will tell you that. I’m sure there are many factors that go into that. But wouldn’t it be interesting if one of those factors were the way your brain is structured, because that would give us some insight into this very basic question of how much of our abilities are hard-wired, how do those abilities change as a function of our environment, and what is the interaction between them.

“That’s a huge question that applies to everything – not just to music. It applies to every aspect of human ability.”

CIRMMT

The Centre for Interdisciplinary Research in Music, Media and Technology (CIRMMT, pronounced “Kermit” by insiders) posted an unusual concert notice this fall.

James Zhang, an award-winning Schulich School flute student, would perform solo works by Bach, Debussy and Varèse at Tanna Schulich Hall on Nov. 9. And the Centre was looking for a few dozen listeners willing to take part in a live, in-concert experiment using the “CIRMMT Audience Response System.”

In particular, the notice specified: “Participants should be between 18-60 years of age with no hearing problems and (for men) clean-shaven cheeks; university music students or professional musicians.

“You need to be there to get set up for the experiment at 6 p.m. (filling out questionnaires and getting hooked up), and the concert starts approximately one hour later.

“The experiment will take about 1.5 hours, and involves listening to several pieces performed live, while rating their emotional effects on you as a listener. Additionally, we will measure various psychophysiological indicators of emotion using biosensors. The performer and audience will be recorded with audio and video recording equipment.

“You will be compensated $10 upon completion. No risks are associated with this research. Your confidentiality and anonymity for serving in this experiment will be protected. This work has been reviewed by the McGill Research Ethics Board and is supervised by Prof. Stephen McAdams of the Schulich School of Music, McGill University.”

So it was that 50 people sat in Tanna Schulich Hall on a Tuesday evening in November with biosensors attached to their cheeks, foreheads and fingers, and iPod touches strapped to their thighs. The sensors were designed to enable researchers to monitor various gauges of listener emotion: respiration rate, perspiration, heart rate and any little activations of facial muscles related to happiness or sadness (a hint of a smile, the trace of a frown). The iPod touches, meanwhile, enabled the listeners to record their subjective reactions to the music.

In typical lab research, McAdams notes, subjects listen to snippets of music and are asked questions after the fact.

“The thing that we think is most exciting is, as you’re listening to music, there’s all kinds of stuff going on, including your experience of it,” he says. “So we’re trying to probe that temporal aspect of the musical experience – these temporal dynamics of music listening.

“We focus on various aspects, and particularly how they link together.” In a piece of music involving a theme and variations, for example, “how are you in real time recognizing material that you’ve heard earlier in the piece” and how do variations affect listeners’ perceptions?

Measuring expectations

Psycho-musicologists have long theorized that the listener’s mind starts to predict what will happen based on his or her lifetime experience of music. “The violation of expectations can actually play a part in creating emotional experience in music,” McAdams says. “So we’re trying to simultaneously measure these expectation processes and the emotional reaction.”

Hauke Egermann, a postdoctoral scholar working with McAdams, adds that “when your expectations are violated” as a listener, it produces “synchronized emotional responses” that can be measured. “So we see a change in subjective feeling, and also changes occur in skin conductance (perspiration), or heart rate.”

The November concert produced a mountain of data that McAdams and Egermann must now sort through, identifying moments where audience reactions were particularly noteworthy – then attempt to determine what exactly touched off the reactions. The painstaking process involves comparing videos of the audience and performer and audio recordings of the live music, carefully synchronized with the recorded biological and subjective data.

“By definition, full emotional reaction should include reactions in all those components at the same time,” Egermann says. “That’s what we’re hoping to see in the data.”

CIRMMT was formed in 2000, uniting researchers and their students from McGill, l’Université de Montréal and l’Université de Sherbrooke. The Centre’s members, including many members of BRAMS, pursue a range of interdisciplinary research related to the creation and performance of music, its recording and transmission, and the perception of music by the listener. Many of the programs were put in place under the leadership of McAdams, who served as director from 2004 to 2009.

The Virtual Haydn project, which resulted in the release last year of a set of four groundbreaking Blu-ray discs by the Naxos label, is one high-profile example of a CIRMMT collaboration combining musical scholarship, performance, production and research.

“We want to understand music in as many ways as possible,” says CIRMMT Director Sean Ferguson, “including what happens in the brain when one is listening to music. That’s a different approach than saying, ‘I want to understand the brain, and I’m feeding different stimuli into the brain to see what the brain reacts to – and one of those stimuli might be music.’ ”

Zatorre frames the tableau this way: “People at CIRMMT are really interested in what makes music so powerful. What elements in the music give people pleasure? Which chords, or which combinations of instruments? … What I work on is sort of the other side of the coin, which is ‘What’s going on in the brain that mediates that?’

“If you look at everybody working together, we’ve got a huge, broad picture, because everyone has different levels of analysis.”